The Web gazes also into you

Beware that, when fighting monsters, you yourself do not become a monster

In the era when I started making websites for no particular reason, we quickly found the experience unsatisfying, because publishing a website offered little feedback. We tried a few things. You might put your email address on the page, and perhaps someone would send you a note. You could put a form on your website to store a remark in a database reflected by the site itself (which we called a “guest book” before the concept lost all its quaint charm and collapsed into “the comments”). These systems, in their most basic form, all fell out of fashion because they attracted spam.

At the very least, you wanted to know if anyone at all had even seen your project. For this purpose you could add a hit counter: a simple number at the bottom of your page, which your server would increment each time it served a request. Today, automated traffic renders such a naive metric all but useless. In quieter times, the count offered a reasonable estimate of readership, but it was fraught. What you really wanted to know was how many people had been to your website, which requires various underhanded identification techniques. Otherwise, it requires you to ask people to identify themselves.
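The gap between hits and people can be sketched in a few lines. This is only an illustration: the `HitCounter` class and the (IP address, user agent) fingerprint are my own hypothetical stand-ins, not how any particular counter worked.

```python
from dataclasses import dataclass, field


@dataclass
class HitCounter:
    """A naive hit counter plus a crude attempt at counting 'people'."""

    hits: int = 0                            # the number shown at the bottom of the page
    seen: set = field(default_factory=set)   # fingerprints of supposedly distinct visitors

    def record(self, ip: str, user_agent: str) -> None:
        # Every request served bumps the visible count, whoever or whatever sent it.
        self.hits += 1
        # Assumption for illustration: (IP, user agent) stands in for a person.
        # It is wrong both ways: a household shares one IP, one person has many devices.
        self.seen.add((ip, user_agent))

    @property
    def visitors(self) -> int:
        return len(self.seen)


counter = HitCounter()
counter.record("203.0.113.7", "Mozilla/5.0")
counter.record("203.0.113.7", "Mozilla/5.0")   # the same reader coming back, or a sibling
counter.record("198.51.100.2", "ExampleBot/1.0")
print(counter.hits, counter.visitors)
```

The first number is honest but nearly meaningless. The second only looks meaningful because the code has started guessing at identity.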

The web is now a fantastic mess of everyone wanting you to log into everything for myriad underhanded reasons pertaining to data collection for ad targeting, getting your email for drip campaigns to remind you to return, pushing you through the sales funnel, etc. But even before the machinery of modern sophisticated surveillance capitalism, it started with every basic creator’s desire to feel seen.

For a time, identity on the web was usually pseudonymous; putting a real name online was considered unwise. Screen names sufficed for enough purposes that pressure to use genuine identity was absent. Things stayed this way until Facebook opened to the general public in 2006.

Mandatory access control

Certainly it is not uncommon for an information system to need to model the people using the system. As a useful extreme we may consider any system within the United States which contains classified information and is therefore subject to Executive Order 12958, issued by President Bill Clinton in 1995.

Automated information systems, including networks and telecommunications systems [require] controls to ensure that classified information is used, processed, stored, reproduced, transmitted, and destroyed under conditions that provide adequate protection and prevent access by unauthorized persons.

Clinton’s order establishes a common language for designating information as Confidential for information whose disclosure could damage national security, Secret if the damage would be “serious,” and Top Secret for concerns that are “exceptionally grave.” Moreover, a notion of “special access program” is defined “for a specific class of classified information that imposes safeguarding and access requirements that exceed those normally required for information at the same classification level.” Special access programs are for the real secrets, disseminated only to small groups of people with not only the requisite clearance but also signatures on all the paperwork to bring them onboard to a particular project.

Classified government systems are a useful reference point for contrast because of the extent to which their data model is preordained and their behavior is governed by rules set outside of the computer system. There is much the programmer is not free to choose. The users of a system that processes classified information are inherently part of its data model because of requirements imposed by the state. All information will be labeled with a classification, and every act of information retrieval will be associated with a specific person who performs it. It does not suffice to ask, say, is this session able to access Secret-level information; such a system has legal obligations at the level of individual users and must make every attempt to distinguish one person from another to discern whether this person may know this fact and to record what information was disseminated to whom for the sake of leak investigation.
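To make the shape of that data model concrete, here is a minimal sketch. It assumes a drastically simplified rule (clearance must meet or exceed the label, and program-restricted material requires enrollment in the program); the names `Level`, `Person`, `Document`, and `Archive` are hypothetical and do not describe any real government system.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Optional


class Level(IntEnum):
    CONFIDENTIAL = 1
    SECRET = 2
    TOP_SECRET = 3


@dataclass(frozen=True)
class Person:
    name: str
    clearance: Level
    programs: frozenset = frozenset()   # special access programs this person has signed into


@dataclass(frozen=True)
class Document:
    title: str
    level: Level                        # every piece of information carries a label
    program: Optional[str] = None       # set only for special access material


@dataclass
class Archive:
    audit_log: list = field(default_factory=list)

    def may_read(self, person: Person, doc: Document) -> bool:
        # The decision is about this person and this fact, not about a session.
        allowed = person.clearance >= doc.level and (
            doc.program is None or doc.program in person.programs
        )
        # Every attempt is recorded against a named individual, allowed or not.
        self.audit_log.append((person.name, doc.title, allowed))
        return allowed


archive = Archive()
alice = Person("alice", Level.SECRET)
bob = Person("bob", Level.TOP_SECRET, frozenset({"project-x"}))
memo = Document("memo", Level.SECRET)
plans = Document("plans", Level.TOP_SECRET, program="project-x")
print(archive.may_read(alice, memo), archive.may_read(alice, plans), archive.may_read(bob, plans))
```

Notice that `Person` is not optional here. Remove it and neither the access rule nor the audit log can be expressed at all, which is exactly what makes this case different from most software.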

Many computer systems share similar concerns regarding integrity and limits on dissemination. Usually these do not rise to the level of exceptionally grave damage to anything. Still, mandatory access rules may apply. In healthcare, education, and finance, specific laws govern information in terms of to whom it may (or must) be given, so we find it necessary for software’s domain to encompass not merely a system’s nominal purpose (say, keeping track of a child’s grades in school) but also a roster of all the people (teachers, school administrators, parents, information system administrators) who are authorized to produce and receive the information within the software’s primary domain.

How “user” seeps in implicitly

We could choose any common web platform for this discussion, but I think Django is a fun example. Django was built at a Kansas newspaper in 2003, published openly in 2005, and spun out into a dedicated nonprofit organization in 2008. (Reportedly the paper that started Django abandoned it in 2018 in favor of WordPress.) The Django Software Foundation enjoys playing off of this history, while promoting itself as a flexible, general-purpose web application framework. From a banner on its introduction:

Django was invented to meet fast-moving newsroom deadlines, while satisfying the tough requirements of experienced web developers.

Django appeals to those who love the “batteries-included” motto that characterizes Python, and it includes a number of things, such as an authentication system.

User objects are the core of the authentication system. They typically represent the people interacting with your site […].

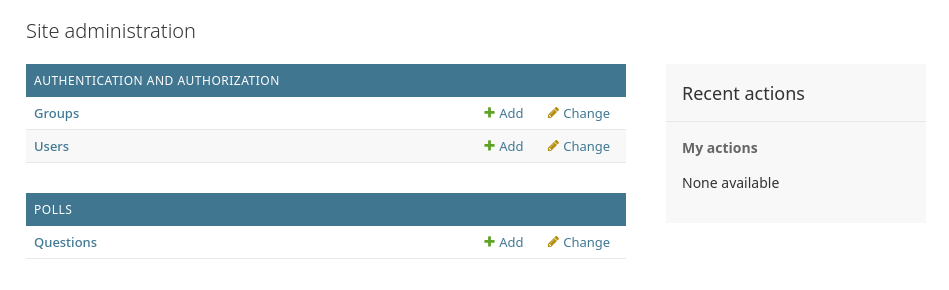

If you follow a few links from the Django home page to the introductory tutorial, on page 2 already we find users. Defining an administrating user is prerequisite to administering the application. Looking at a screenshot of the administration page, we see that users are there before we have even decided what we are building.

The tutorial explains:

Django was written in a newsroom environment, with a very clear separation between “content publishers” and the “public” site. Site managers use the system to add news stories, events, sports scores, etc., and that content is displayed on the public site. Django solves the problem of creating a unified interface for site administrators to edit content.

If we choose this platform, we’ve already decided we’re going to build something which will be, in some ways, like an online newspaper. Probably our product will not be a newspaper. But rather than start from scratch, as a Django user we start from a newspaper base and then customize it to meet our “tough requirements.” Like a renovated building, it will contain structural elements erected for the edifice’s original purpose, and in the case of Django, this bone structure includes a user model.

The user model is wrong for families

Programmers’ notions that a computer is used by a single person at a time, and that the software will always operate with knowledge of who that person is, assumes and reinforces individual isolation. Nowhere is this more evidently difficult and wrong than in family life. Tasks that used to be easily shared between family members have become much more difficult as a result.

Until the proliferation of cell phones so cheap that each child could have one of their own, a household typically had a single phone line. A caller understood that they were calling a house, not a person. We, the common users of telephony, never made a conscious decision to adopt this system, nor were we making an intentional change when we departed from it. The pattern of the single household telephone followed from the constraints of wired telephony’s mechanism. Likewise, the shift from shared to individual lines followed from the constraints of cellular telephony’s mechanism; you couldn’t sign up for a phone number that rings multiple cell phones simultaneously even if you wanted to.

Much of this development seemed positive. No longer did we have to field calls that were intended for someone else, handing the phone over or taking down a note, because calls could be targeted more precisely. But this also means that every call now has to be targeted precisely to an individual. A school teacher who needs to talk to any of a child’s caretakers no longer has the option to phone the home; the teacher cannot place a call without selecting an individual. Generally that will be the mom, reinforcing gendered labor division via an accident of technology. Whereas a father in the 1990s might have had some ability to field the home’s calls while Mom was sick, today the system circumvents him mechanically by ringing her direct line.

Email took a turn similar to telephony. Physical mail was a somewhat communal affair, with letters arriving at a single mailbox and entering the household visibly. A letter of general interest might sit in plain view on the kitchen table. When households started to go online, it was often primarily for the purpose of using email. You got one email address from your internet service provider, and you only had one internet-connected computer in the house, and so, for a time, not much changed. In 1996, Hotmail showed up. Like the cell phone, webmail services offered the attraction of being conveniently available from anywhere, free of any tether to the home. And, like the cell phone, webmail initiated the demise of another form of household inbox. When addresses are being handed out for free, there’s no reason not to grab one of your very own.

My uncle in Washington, despite a career in biotech, has always been something of a conservative technophobe at home, and to this day his nuclear family can be contacted via their 1990s email address. I found this funny and quaint for a long time, until a few years ago. I realized that nobody in my own family could assist with mundane paperwork tasks when someone needed help, because every chore involved some individually-held password or email verification. Children nearing adulthood especially need help with mundane paperwork. So we created our own family email account.

With email, unlike the cell phone, we still have the ability to make this choice, but the choice does have to be made consciously, and you are going against the grain. Google, for example, warns that their accounts are intended for use by only one person. Websites will often fight your attempts to create group accounts. They may, for example, require phone-based verification for authentication, which ties us helplessly to cell infrastructure and its model of individual data silos.

Do you really need it?

Facebook’s major advantage came from convincing people it was okay to use their real name online. Before this, putting personally-identifying information online was commonly considered to be dangerous. To create a network where people would find each other, the genius of Facebook lay simply in being bold enough to tell us that common ideas about internet safety were wrong. From there, it was only a short hop to declaring that real names were mandatory. This cleared the way for every other internet service to follow suit.

OAuth delivered another devastating punch. Initially developed by Twitter and Google, the OAuth mechanism became the standard for anyone who wants to start a website but doesn’t want to deal with (or require their users to deal with) the mechanics of setting up an account. All you need to do is add a “log in with Google” button. This has implications the website owner may not have considered. If Google doesn’t want accounts to be shared by multiple people, and Google accounts are your site’s accounts too, then their account-sharing rules become your account-sharing rules. Technical convenience drives policy choice, and possibly we don’t even notice it happen.

For regulated information systems, there is a legitimate and legislated need for closely associating computerized records with the authorized individuals who access them. For Facebook and the rest of the ad industry, it is apparent enough why the business wants user identity, and this is discussed ad nauseam elsewhere.

But for plenty of other computer applications, it seems that user identity has often crept thoughtlessly into systems simply because the technology and the programmer culture have inherited the practice from tech giants. We may learn fundamentals of programming languages and algorithms from schools, but we learn to write complex software by using the coding tools and practices helpfully open-sourced by Big Tech. It seems the only thing we know how to build anymore, or at least the only thing that it occurs to anyone to build using a computer anymore, is surveillance tech.

Internet-enabled services with data available from any machine anywhere are wanted, and you do need access controls to secure them. But you often don’t need the security model to be based on people.
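One way to picture an access control that is not based on people is a key held by possession, like the key to a house. The sketch below is an assumption of mine for illustration, not a finished design: `SharedCalendar` and its methods are hypothetical names, and the only question the code ever asks is whether the caller holds the key, never who the caller is.

```python
import hmac
import secrets


class SharedCalendar:
    """A household calendar guarded by a shared key rather than by user accounts."""

    def __init__(self) -> None:
        # One random key for the whole household, handed around like a house key.
        self._key = secrets.token_urlsafe(16)
        self.events = []

    @property
    def key(self) -> str:
        return self._key

    def _holds_key(self, presented: str) -> bool:
        # Constant-time comparison; note that no identity is looked up or stored.
        return hmac.compare_digest(presented.encode(), self._key.encode())

    def add_event(self, presented_key: str, event: str) -> bool:
        if not self._holds_key(presented_key):
            return False
        self.events.append(event)   # recorded without any author field
        return True


calendar = SharedCalendar()
calendar.add_event(calendar.key, "Dentist, Thursday 3pm")   # anyone in the house
calendar.add_event("a-guess", "Spam")                       # anyone outside it
print(calendar.events)
```

The data stays reachable from anywhere and closed to strangers, yet the system has no roster, no sessions, and nothing to say about which family member wrote the entry.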

Early pictures of what computers are good for often involved computerized versions of everyday things. The calendar hanging on the kitchen wall becomes a digital calendar that you can now view and update from anywhere, without needing to enter the kitchen. Did this vision fructify? Sort of. But the individualized nature of Google Calendar, or whatever other systems we’ve ended up using, makes these systems fail (because they did not try) to serve as strictly digitally-improved analogs to the paper calendar.

Did digital cameras emerge as strictly-better replacements for film cameras? There are some aspects that are very nice, for sure, but also the individualist data model has destroyed the locally communal accessibility of prints. Again this follows from a desire to have remotely backed-up and accessible-from-anywhere data, which implies a need for access controls. To have every photo you take immediately accessible to all of your family would probably not be desired. And yet, no prevailing substitute has emerged to suitably replace the experience of bringing home a stack of prints from the photo lab and laying them out on the table. So the overall effect is a disappearance of the presence photographs once had, in favor of the convenient and cheap approach of letting a collection of digital files, too large to sort through, fade away into the great warehouse in the cloud.

Designing computer systems to be used by groups and households, with access controls that are discretionary and based on physical proximity, may go some way toward being able to take advantage of digital representation and high-speed transmission without sacrificing the casual sharing that substantial objects once offered us without a thought. If you are a computer programmer and you are not trying to build a surveillance system, maybe it would help to look around at everyday objects that you use without the object having any awareness of who you are. Let’s get creative in figuring out how to limit the dissemination of mildly-private information without always resorting to strictly isolating people from one another. Let’s build more tools that have no idea who’s using them.

“The user” is not even real

Anyone who has tried to find out how many people have actually read their blog post knows that users are an illusion, or perhaps more charitably an approximation, that programmers invent as a projection of their own desires.

The user is me lying to a website that thinks I’m my spouse.

It’s two kids passing a tablet back and forth to one another.

It’s a web scraper.

It’s a cat jumping onto the keyboard.

The thing you’re building is just a thing, and it neither controls nor sees its situation. Sometimes we forget this. Even before the AI mania started, we acted like each computer system we made was a little guy who talks to people. It’s not. It’s a thing, and it can’t see whose hands are on it. And if you are sincerely trying to make a tool for people to use however they see fit, then the tool shouldn’t be trying to look back at those people anyway.

This is not an exhortation to Big Tech companies to change their ways. That would make no sense; Google’s business is what it is, and its business is to know you. But the way these companies keep you participating in this is by providing things you will use. I propose that the nature of their business causes them to do a poor job at it. This presents an opportunity for the upstart competitor with enough imagination. Imagination is needed because following standard software best practices as articulated by and for adtech companies will only result in more services that work like adtech services. Picking up their frameworks and applying them with different intent will not produce a substantially different result.